Examining the Prospects of Generative AI from the Gaming Industry’s Perspective

Posted Date – 12:30 AM, Mon – 6/19/23

Organizations like Ubisoft have developed their own proprietary AI models to program in-game dialogue.

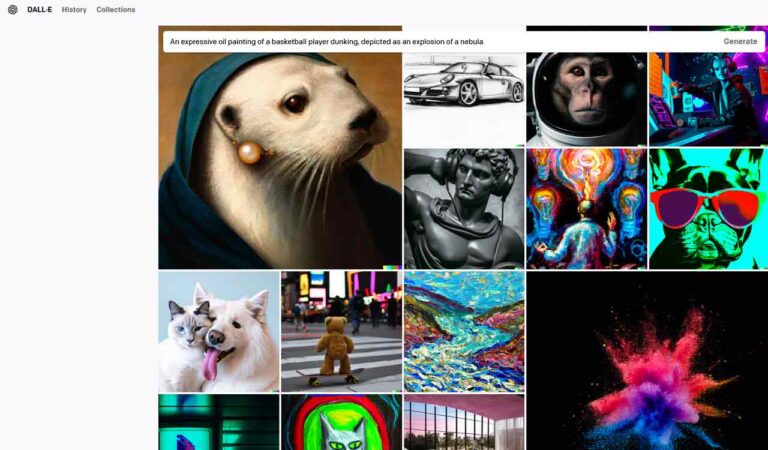

Over the past six months, terms such as “ChatGPT”, “AI Search”, “Google Bard”, “Dall-E”, “Stable Diffusion”, “Large Language Models (LLM)” and “Generative AI” have become common topics of discussion.

While much of the world, and its workplaces, is still trying to understand what exactly this technology can offer, many of the functions and features of these AI models have now been open for testing for several months.

Since March, I’ve regularly interacted with OpenAI’s ChatGPT, Microsoft’s AI-integrated Bing search, and Google’s Bard to explore not just the results they produce, but how they perceive reality. This week’s column combines some of my observations with some estimates of how AI models can help the game production process.

AI can sift through large volumes of data and information

A common assumption is that large language models (LLMs) can provide concise, informative text that enables rapid understanding of any given topic. In practice, the output these systems generate is often vague and overly general. This discrepancy is probably best explained by the models’ reliance on text gathered from the web and, for search-connected tools, on search engine results to generate their responses.

As a result, when you seek information from an AI model, the results often reflect a compilation of common terms related to your query rather than the specific topic at hand. This also helps explain the fictitious references that systems like ChatGPT sometimes produce.

AI can help with game writing

This belief is so prevalent that organizations like Ubisoft have developed their own proprietary AI models to write in-game dialogue. The industry’s confidence in this approach has led most game organizations to wonder whether they will even need writers and character development teams in the future.

I experimented extensively with game dialogue writing in ChatGPT and Google Bard. I asked them to create five female characters for a dating sim set in a bar over a weekend. I specified that the game’s protagonist is an introvert and asked the models to suggest scenarios in which the protagonist and the female characters might interact.

The results showed a surprising bias in race and description. Both models produced five white characters, all rooted in the United States. When I asked for character diversity, I got five characters of different races and ethnicities who still had North American or European names.

The more diversity cues I fed the system, the more confusing and unwieldy the results became. After nearly two hours, I had made little progress, and the exercise had produced hardly any usable dialogue sequences.

AI can generate game worlds and write code

This is the aspect that excites me the most, especially when I look at the images that systems like Midjourney and OpenAI’s Dall-E render from text prompts.

The results are sometimes not only aesthetically pleasing and accurate, but also very imaginative. However, these renditions are controversial because they use existing work by artists across creative disciplines as the basis and benchmark for the AI’s own output. Likewise, in coding, people have tried to get LLMs to write simple games like Pac-Man and Flappy Bird.

Human guidance and supervision are necessary during most of these exercises. Games and platforms need tight, efficient code and repeated refinement to stay responsive, and I am not sure AI models can carry out that kind of testing and iterative review on their own.

As far as I can tell, AI-based models are far from revolutionizing any key process of game production. While their ability to create worlds and visual effects is important, it will be interesting to see whether those graphics and visual effects can lead to playable sequences. I think we are still a long way from people losing their jobs.